The Ultimate Geospatial Data Validation Checklist

To paraphrase Stan Lee: With great data comes great responsibility. As industry professionals, we share the responsibility of ensuring our data is of good quality. Depending on the nature of your work, decisions based on bad data can have serious consequences.

Before you use a dataset for an important task, check every aspect of it for completeness, correctness, consistency, and compliance. Ensuring good-quality data means checking that it fulfills your requirements, then repairing it where it doesn’t.

Below is a data quality checklist that can help you validate and repair your geospatial data. Validation steps will vary to some extent depending on the type of data (2D, GIS, raster, etc.), but this list will provide a good guideline. You can use manual verification and out-of-the-box tools to verify your data, or you can use FME to detect and repair problems automatically.

Check the schema

The first step is to make sure the data model, or schema, is correct for your destination system. This includes enforcing the correct:

☑ Feature type (i.e. layer, level, table, feature class) names.

☑ Attribute names and types.

☑ Coordinate system.

☑ Allowed geometries.
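
If you want to script part of this check yourself, the idea can be sketched in a few lines of Python. This is only an illustration, not how FME does it: it assumes features arrive as GeoJSON-like dictionaries, and the `EXPECTED_SCHEMA` and `ALLOWED_GEOMETRIES` values are hypothetical placeholders for your own data model. It does not cover the coordinate system, which you would compare at the dataset level.

```python
# Hypothetical destination schema: attribute name -> expected Python type.
EXPECTED_SCHEMA = {"id": int, "name": str, "height_m": float}
# Hypothetical set of geometry types the destination accepts.
ALLOWED_GEOMETRIES = {"Point", "LineString", "Polygon"}


def check_schema(feature, expected=EXPECTED_SCHEMA, allowed=ALLOWED_GEOMETRIES):
    """Return a list of human-readable schema problems for one feature."""
    problems = []
    props = feature.get("properties") or {}

    # Every expected attribute must be present with the expected type.
    for name, expected_type in expected.items():
        if name not in props:
            problems.append(f"missing attribute: {name}")
        elif not isinstance(props[name], expected_type):
            problems.append(f"wrong type for {name}: {type(props[name]).__name__}")

    # Attributes the destination does not know about are reported too.
    for name in props:
        if name not in expected:
            problems.append(f"unexpected attribute: {name}")

    # The geometry type must be one the destination allows.
    geometry = feature.get("geometry") or {}
    if geometry.get("type") not in allowed:
        problems.append(f"geometry type not allowed: {geometry.get('type')}")

    return problems
```

An empty list means the feature matches the assumed schema; anything else is a candidate for schema mapping or rejection.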

Check the data values

Check the content of the attributes, traits, features, rows, and/or other properties specific to your dataset.

☑ Is the data type correct for this field?

☑ Is the value within the valid range or part of a domain or enumerated list?

☑ Check for duplicates, for example of a unique key.

☑ Check for nulls. Are there mandatory values, or are null / empty values allowed? Are nulls represented consistently (NaN, infinity, empty strings, etc.)?
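
As a rough sketch of the value checks above, here is one way they might look in plain Python. It assumes rows are dictionaries, and the function names, parameters, and problem messages are made up for illustration. Treating NaN, infinity, and blank strings as null-like is a choice you may want to adjust.

```python
import math
from collections import Counter


def is_null(value):
    """Treat None, blank strings, NaN and infinity as null-like."""
    if value is None:
        return True
    if isinstance(value, str):
        return value.strip() == ""
    if isinstance(value, float):
        return math.isnan(value) or math.isinf(value)
    return False


def check_values(rows, key, mandatory=(), ranges=None, domains=None):
    """Return (row_index, field, problem) tuples for a list of dict rows."""
    ranges = ranges or {}
    domains = domains or {}
    problems = []
    key_counts = Counter(row.get(key) for row in rows)

    for index, row in enumerate(rows):
        # Unique key: any value seen more than once is a duplicate.
        if key_counts[row.get(key)] > 1:
            problems.append((index, key, "duplicate key"))
        # Mandatory fields may not hold a null-like value.
        for field in mandatory:
            if is_null(row.get(field)):
                problems.append((index, field, "null in mandatory field"))
        # Numeric fields must fall inside an inclusive (low, high) range.
        for field, (low, high) in ranges.items():
            value = row.get(field)
            if is_null(value):
                continue
            if not isinstance(value, (int, float)):
                problems.append((index, field, "not numeric"))
            elif not low <= value <= high:
                problems.append((index, field, "out of range"))
        # Coded fields must use a value from the allowed domain.
        for field, allowed in domains.items():
            value = row.get(field)
            if not is_null(value) and value not in allowed:
                problems.append((index, field, "not in domain"))
    return problems
```

For example, `check_values(rows, "id", mandatory=("class",), ranges={"lanes": (1, 8)}, domains={"class": {"road", "rail"}})` would report duplicate ids, blank classes, lane counts outside 1 to 8, and classes outside the list.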

Validate the geometry

Invalid geometry comes in many forms. Problems might include:

☑ Self-intersections.

☑ Degenerate or corrupt geometries. For example, a donut without a hole, a multi-part geometry without parts, or an arc whose defined end points are inconsistent with the actual arc.

☑ Null geometries.

☑ Vertices with missing normals.

☑ In the case of geometry with a texture, check for associated texture coordinates.

☑ Invalid solid boundaries (could include unclosed boundaries, invalid projection, incorrect face orientation, unused vertices, free faces).

☑ Invalid solid voids. For example, a disconnected shell interior.

☑ Non-planar surfaces, i.e. vertices are not on the same plane in 3D space.

☑ Duplicate consecutive points, in 2D or 3D.
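
To make two of these checks concrete, here is a minimal sketch in plain Python of duplicate consecutive points and self-intersection. It is a simplified illustration under stated assumptions, not a full validator: the function names are invented, the self-intersection test is 2D only, compares every pair of edges (fine for small rings, slow for large ones), and only detects edges that properly cross. Touching or overlapping edges are not reported.

```python
def duplicate_consecutive_points(coords):
    """Return each index i where coords[i] repeats coords[i - 1] (2D or 3D)."""
    return [
        i for i in range(1, len(coords))
        if tuple(coords[i]) == tuple(coords[i - 1])
    ]


def _orientation(p, q, r):
    """Sign of the cross product (q - p) x (r - p): 1, -1 or 0."""
    cross = (q[0] - p[0]) * (r[1] - p[1]) - (q[1] - p[1]) * (r[0] - p[0])
    return (cross > 0) - (cross < 0)


def _segments_cross(a, b, c, d):
    """True if segments ab and cd cross at a single interior point."""
    return (
        _orientation(a, b, c) * _orientation(a, b, d) < 0
        and _orientation(c, d, a) * _orientation(c, d, b) < 0
    )


def ring_self_intersects(ring):
    """True if any two non-adjacent edges of a 2D ring properly cross."""
    edges = list(zip(ring, ring[1:]))
    for i in range(len(edges)):
        # Skip the neighbouring edge, which always shares an end point.
        for j in range(i + 2, len(edges)):
            if _segments_cross(*edges[i], *edges[j]):
                return True
    return False
```

A bow-tie ring such as `[(0, 0), (2, 2), (2, 0), (0, 2), (0, 0)]` is flagged, while a plain square is not.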

Compliance with standards

Sharing data usually means ensuring it meets a set of standards, or enforcing its compliance with an initiative. For example:

☑ OGC compliance includes checking for self-intersection, repeated points, unparsable geometry, etc.

☑ INSPIRE compliance involves a few things. Thankfully, we have a blog post and other resources to help you accomplish this.

☑ Other specific international standards or trade standards for data.

☑ Your company’s standards. For example, you might need to design your own tests to ensure your topology or attributes meet your established design constructs.
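
Standards checks usually boil down to small, testable rules. As one hedged example, the OGC Simple Features model treats a linear ring as a closed, simple line, which in practice means at least four points with the first equal to the last. The helper below is a hypothetical sketch of that single rule, not a complete OGC conformance test.

```python
def linear_ring_problems(ring):
    """Return basic structural problems for a polygon ring given as points."""
    problems = []
    # A closed ring around any area needs at least 4 points (first == last).
    if len(ring) < 4:
        problems.append("fewer than 4 points")
    # The first and last points must coincide for the ring to be closed.
    if ring and tuple(ring[0]) != tuple(ring[-1]):
        problems.append("ring is not closed")
    return problems
```

You can write rules for your own company standards in the same shape: one small function per rule, each returning a list of problems.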

Format-specific QA/QC

You should perform quality checks that are tailored to the format of your destination system, especially if you’ve converted from another data type. Examples include:

☑ CAD data: ensure the robust extraction of layers, geometry, text, line types, blocks, extended entity data, etc.

☑ XML / JSON: validate the syntax or schema.

☑ Tabular data: ensure values pass logical tests; check integration with spatial details.

☑ Databases: check the data and geometry before attempting to load it into a central repository.

☑ Point clouds: check for correct components and values.
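
For the XML / JSON item, a syntax check is the easiest part to script. The sketch below uses only Python's standard library and checks that a document is well formed; the function names are made up for this example. Validating against a schema (XSD or JSON Schema) is a separate step that needs additional tooling and is not shown.

```python
import json
import xml.etree.ElementTree as ET


def json_syntax_error(text):
    """Return None if text parses as JSON, otherwise a short error message."""
    try:
        json.loads(text)
    except json.JSONDecodeError as exc:
        return f"line {exc.lineno}, column {exc.colno}: {exc.msg}"
    return None


def xml_syntax_error(text):
    """Return None if text is well-formed XML, otherwise the parser message."""
    try:
        ET.fromstring(text)
    except ET.ParseError as exc:
        # The message already includes the line and column of the problem.
        return str(exc)
    return None
```

Returning the parser's message rather than a plain true/false makes it easier to give the data supplier useful feedback.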

Workflow-based validation

If you work with data in an environment that demands a workflow (real-time, self-serve, automated uploads, or otherwise), your data would likely benefit from other validation techniques. For example:

☑ Detect differences in an updated version of the same data.

☑ Validate submitted data (via email, upload, directory watch, scheduled task) and immediately give feedback to stop bad data from being processed.

☑ Check that a submitted package contains the required files and formats, perform a schema check and a data-level check, then zip and encrypt the package for clients to download—as an example self-serve workflow. You may have other requirements.
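
Two of these workflow checks are simple enough to sketch in Python. The first compares two versions of a dataset by a unique key, assuming every row is a dictionary that carries that key. The second lists required files missing from a zipped submission. Both function names and the example file names are hypothetical.

```python
import zipfile


def detect_changes(old_rows, new_rows, key="id"):
    """Compare two versions of a dataset and list added, deleted, updated keys."""
    old = {row[key]: row for row in old_rows}
    new = {row[key]: row for row in new_rows}
    return {
        "added": sorted(new.keys() - old.keys()),
        "deleted": sorted(old.keys() - new.keys()),
        # A key present in both versions counts as updated if any value differs.
        "updated": sorted(k for k in old.keys() & new.keys() if old[k] != new[k]),
    }


def missing_from_package(zip_source, required_names):
    """Return the required file names that are absent from a zip package."""
    with zipfile.ZipFile(zip_source) as package:
        present = set(package.namelist())
    return sorted(set(required_names) - present)
```

If `missing_from_package(path, ["parcels.geojson", "metadata.xml"])` returns anything, you can reject the submission straight away and tell the sender which files to add.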

Repairing and reporting bad data

Of course, after finding inconsistencies, errors, and/or poor compliance in any of the above checks, the next step is to repair the dataset where it doesn’t pass. Fixes might include:

☑ Map the schema to fit the destination data model.

☑ Geometry manipulation. For example: snap dangling lines closed, clip crossing lines, fill in slivers, remove spikes, bring vertex points of features together if they are within a certain distance of each other.

☑ Enforce compliance with your company’s standards. For example: remove duplicate features, filter out the wrong kind of geometry, test for specific attribute values or ranges of values.

☑ Simply flag the bad data and return it for human analysis.
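
A few of these repairs can be sketched in plain Python to show the idea. The helpers below are illustrative only, with invented names: one drops repeated consecutive points, one snaps points onto nearby reference points within a tolerance, and one separates clean features from those to return for human analysis. Real repairs such as filling slivers or removing spikes need a proper geometry engine.

```python
import math


def remove_consecutive_duplicates(coords):
    """Drop any point that repeats the point immediately before it."""
    cleaned = []
    for point in coords:
        if not cleaned or tuple(point) != tuple(cleaned[-1]):
            cleaned.append(point)
    return cleaned


def snap_to_anchors(coords, anchors, tolerance):
    """Move each point onto its nearest anchor if that anchor is within tolerance."""
    snapped = []
    for point in coords:
        nearest = min(anchors, key=lambda a: math.dist(a, point), default=None)
        if nearest is not None and math.dist(nearest, point) <= tolerance:
            snapped.append(tuple(nearest))
        else:
            snapped.append(tuple(point))
    return snapped


def partition(features, find_problems):
    """Split features into those that pass and those flagged for review."""
    passed, flagged = [], []
    for feature in features:
        problems = find_problems(feature)
        if problems:
            flagged.append({"feature": feature, "problems": problems})
        else:
            passed.append(feature)
    return passed, flagged
```

Keeping the problems attached to each flagged feature means the person reviewing it does not have to rerun the checks to see what went wrong.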

Whatever the outcome of a validation check, create a clear report that can be shared with interested parties. The final steps are therefore:

☑ Measure and describe the quality of the data in a standardized way, and publish the result in a shareable form, e.g. a data download or PDF.

☑ Send the report via email, SMS, form, etc.
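
As a small sketch of the "measure and describe" step, the function below turns a list of detected problems into a summary you could save as JSON or feed into a PDF template. The report fields and the `(feature_id, check_name)` input shape are assumptions made for this example, not a standard format. Sending the report is left out.

```python
import json
from collections import Counter


def summarize(total_features, problems):
    """Build a summary from an iterable of (feature_id, check_name) tuples."""
    problems = list(problems)
    failed_ids = {feature_id for feature_id, _ in problems}
    return {
        "total_features": total_features,
        "failed_features": len(failed_ids),
        # Share of features with no reported problem; None for an empty dataset.
        "pass_rate": (
            round(1 - len(failed_ids) / total_features, 4)
            if total_features else None
        ),
        "problems_by_check": dict(Counter(check for _, check in problems)),
    }


def report_as_json(total_features, problems):
    """Serialize the summary so it can be saved or attached to a message."""
    return json.dumps(summarize(total_features, problems), indent=2)
```

Producing the same fields on every run makes it possible to compare quality between submissions over time.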

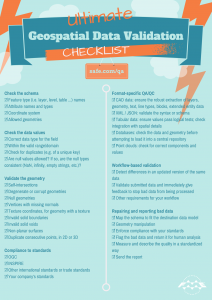

Printable graphic

Click the image to see the enlarged, printable version of The Ultimate Geospatial Data Validation Checklist.

Automatic quality control with FME

It’s easy to get overwhelmed by the size of the above list, but the important thing I want to stress here is that FME helps you do all of this automatically. For example, all geometry validation listed above can be done in one step using the GeometryValidator transformer, while custom checks against your own standards can also be set up using the intuitive Tester transformer. Browse these presentation slides to see exactly which transformers can help you validate different parts of the above checklist.

The following presentations and case studies provide some good examples of real-world data validation workflows.

- Colonial Pipeline automatically checks CAD drawings against their drafting standard, and provides a detailed log of errors and compliance issues.

- The Bureau of Land Management National Operations Center (NOC) assesses the accuracy of data and standardizes it.

- Metria AB created an automated quality control system, in the form of a data validation service that anyone can use from anywhere.

- INSER launched an FME Cloud based platform that offers data validation services online, for those who need a user-friendly way to process the format, coordinate system, data model, and quality of their data.

- Alberta Sustainable Resource Development created an automated, web-based CAD validation system.

What steps do you take to validate your data? What can you add to the above list?
